The Journey of Neural Networks

The landscape of artificial intelligence has been transformed by neural networks, marking a profound shift in how machines learn and process information. What was once a simple concept has evolved into a complex system underpinning countless technologies we engage with today. This evolution traverses various stages, each pivotal in shaping the current capabilities of deep learning.

- 1950s: Frank Rosenblatt introduced the Perceptron, one of the first neural network models able to learn from data. Loosely inspired by biological neurons, it used a single layer of adjustable weights to recognize patterns. Although limited, it paved the way for further advancements and laid the groundwork for future models.

- 1980s: The popularization of backpropagation was a significant turning point for neural networks. This algorithm computes how much each weight contributed to the prediction error and adjusts the weights accordingly, allowing networks to learn much more effectively. David Rumelhart, Geoffrey Hinton, and Ronald J. Williams brought it to wide attention in 1986, enabling multi-layer networks to perform complex tasks.

- 2010s: The rise of Deep Learning during this decade marked a renaissance in the field. Using networks with many layers, deep learning algorithms achieved unprecedented success in domains such as image recognition, where convolutional networks like AlexNet (2012) sharply reduced error rates on the ImageNet object-recognition benchmark. This period also saw major advances in natural language processing, notably OpenAI’s GPT models (starting in 2018), which transformed how machines understand and generate human language.

Each advancement has been driven by research breakthroughs and technological progress, from increased computing power to larger and richer datasets, and these developments have allowed neural networks to flourish. Today, neural networks appear in a wide range of applications. Self-driving cars use deep learning for real-time navigation, and streaming services like Netflix use recommendation algorithms that keep viewers engaged by suggesting content based on their previous viewing habits and preferences.

The Impact of Neural Networks

The implications of this evolution are vast and continue to expand as new technologies emerge. In the field of healthcare, AI-driven diagnostics are transforming patient outcomes by aiding doctors in identifying diseases with greater accuracy and speed. For instance, imaging tools powered by neural networks can detect anomalies in radiology images that may be missed by the human eye.

In the finance sector, predictive analytics backed by neural networks are driving investment strategies by analyzing market trends and making forecasts about stock performance. Companies like Bloomberg have begun to implement these tools to provide traders with insights that can lead to more informed decisions.

Moreover, in the realm of entertainment, algorithms that curate personalized content are reshaping how audiences consume media. Spotify, for example, employs neural networks to analyze listener behavior, producing tailored playlists that resonate with individual tastes.

As we delve deeper into the evolution of neural networks, we uncover the fascinating journey that led to today’s sophisticated AI systems. This article will explore each phase and its significance in paving the way for future innovations, fundamentally changing our interaction with technology and the world around us.

The Foundations of Neural Networks

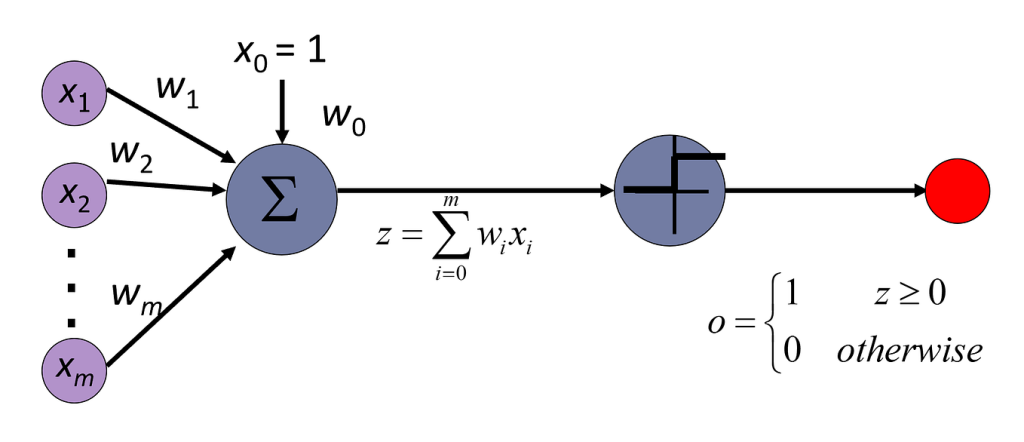

The early days of artificial intelligence saw the inception of the Perceptron, an innovative step towards mimicking biological neural behavior. Developed by Frank Rosenblatt in the late 1950s, this single-layer model classified an input by computing a weighted sum of its features and comparing it to a threshold, loosely modeled on how biological neurons were thought to operate. The Perceptron could solve simple binary classification tasks, separating two classes of data whenever a straight line (or, in higher dimensions, a hyperplane) could divide them. Its key limitation was that it could not learn patterns that are not linearly separable.
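To make the weighted-sum-and-threshold idea concrete, here is a minimal Python sketch of the perceptron learning rule. The step activation, the learning rate of 1, the epoch limit, and the use of the logical AND function as training data are illustrative assumptions, not details taken from Rosenblatt's original work.

```python
def predict(weights, bias, x):
    """Return 1 if the weighted sum of the inputs plus the bias is positive, else 0."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(samples, labels, epochs=20, lr=1.0):
    """Perceptron update rule: w <- w + lr * (target - prediction) * x."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        errors = 0
        for x, target in zip(samples, labels):
            error = target - predict(weights, bias, x)
            if error != 0:
                errors += 1
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                bias += lr * error
        if errors == 0:  # every sample classified correctly: training has converged
            break
    return weights, bias


# Logical AND is linearly separable, so the rule is guaranteed to converge.
AND_INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]
AND_LABELS = [0, 0, 0, 1]

weights, bias = train_perceptron(AND_INPUTS, AND_LABELS)
print([predict(weights, bias, x) for x in AND_INPUTS])  # prints [0, 0, 0, 1]
```

On this data the rule settles on weights [2.0, 1.0] and a bias of -2.0 after six passes; the perceptron convergence theorem guarantees that some such solution is found whenever the classes are linearly separable.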

These limitations were formalized in 1969 by Marvin Minsky and Seymour Papert in their book “Perceptrons,” which showed that a single-layer perceptron cannot solve problems that are not linearly separable, such as the XOR (exclusive OR) function. Their critique contributed to a decline in interest in the field and pushed researchers to look for enhancements that could empower the next generation of neural networks. The answer was the multi-layer perceptron (MLP), which stacks several layers of neurons to capture more complex patterns; the open problem was how to train such networks efficiently.
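The XOR limitation can be checked directly. A single threshold unit would need b ≤ 0 so that (0,0) maps to 0, w1 + b > 0 and w2 + b > 0 so that (1,0) and (0,1) map to 1, and w1 + w2 + b ≤ 0 so that (1,1) maps to 0. Adding the middle two inequalities gives w1 + w2 + b > -b ≥ 0, a contradiction. The sketch below illustrates both halves of the story: a brute-force search over a small, arbitrarily chosen weight grid finds no single unit that computes XOR, while a two-layer network does. The network's weights are hand-picked for illustration (OR and NAND hidden units feeding an AND output unit), not learned.

```python
from itertools import product


def step(z):
    """Threshold activation: fire (1) when the weighted sum is positive."""
    return 1 if z > 0 else 0


def unit(weights, bias, x):
    """A single perceptron-style unit computing step(w . x + b)."""
    return step(sum(w * xi for w, xi in zip(weights, x)) + bias)


XOR_INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]
XOR_LABELS = [0, 1, 1, 0]

# 1) Search every single unit whose weights and bias lie on a grid from -3.0 to 3.0.
grid = [v / 2 for v in range(-6, 7)]
single_unit_solutions = [
    (w1, w2, b)
    for w1, w2, b in product(grid, repeat=3)
    if [unit((w1, w2), b, x) for x in XOR_INPUTS] == XOR_LABELS
]
print(len(single_unit_solutions))  # prints 0


# 2) Two layers suffice: XOR(x) = AND(OR(x), NAND(x)).
def xor_network(x):
    h_or = unit((1, 1), -0.5, x)      # hidden unit computing OR
    h_nand = unit((-1, -1), 1.5, x)   # hidden unit computing NAND
    return unit((1, 1), -1.5, (h_or, h_nand))  # output unit computing AND


print([xor_network(x) for x in XOR_INPUTS])  # prints [0, 1, 1, 0]
```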

The Renaissance: Backpropagation and Multi-Layer Networks

The 1980s marked a pivotal revival of interest in neural networks, thanks largely to the widespread adoption of the backpropagation algorithm. Popularized in 1986 by researchers including David Rumelhart, Geoffrey Hinton, and Ronald J. Williams, backpropagation gave multi-layer networks an efficient way to learn from their errors. By propagating the error signal backward through the layers with the chain rule, it computes how much each weight contributed to the error, so networks can adjust their weights to refine their predictions and learn complex decision boundaries.
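A minimal sketch of backpropagation in plain Python follows, for a network with two inputs, two sigmoid hidden units, and one sigmoid output, trained on XOR. The architecture, the flat parameter layout, the halved mean-squared-error loss, the learning rate of 0.5, and the random seed are all illustrative assumptions. The `backprop` function applies the chain rule layer by layer, and `numerical_grad` compares its result with finite differences, a common way to check hand-written gradients.

```python
import math
import random


def sigmoid(z):
    """Logistic activation, squashing any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


# Assumed flat parameter layout: p[0:4] hidden weights (unit 0: p[0], p[1];
# unit 1: p[2], p[3]), p[4:6] hidden biases, p[6:8] output weights, p[8] output bias.
def forward(p, x):
    """Forward pass of the 2-2-1 network; returns hidden activations and the output."""
    h = [sigmoid(p[2 * j] * x[0] + p[2 * j + 1] * x[1] + p[4 + j]) for j in range(2)]
    y = sigmoid(p[6] * h[0] + p[7] * h[1] + p[8])
    return h, y


def loss(p, data):
    """Halved mean squared error over the dataset."""
    return sum(0.5 * (forward(p, x)[1] - t) ** 2 for x, t in data) / len(data)


def backprop(p, data):
    """Gradient of `loss` with respect to every parameter, via the chain rule."""
    grad = [0.0] * len(p)
    n = len(data)
    for x, t in data:
        h, y = forward(p, x)
        # Error signal at the output unit: dL/dz_out = (y - t) * y * (1 - y) / n
        delta_out = (y - t) * y * (1 - y) / n
        grad[8] += delta_out
        for j in range(2):
            grad[6 + j] += delta_out * h[j]
            # Send the error backward through output weight p[6 + j] into hidden unit j
            delta_h = delta_out * p[6 + j] * h[j] * (1 - h[j])
            grad[4 + j] += delta_h
            grad[2 * j] += delta_h * x[0]
            grad[2 * j + 1] += delta_h * x[1]
    return grad


def numerical_grad(p, data, eps=1e-6):
    """Central finite differences, used only to sanity-check `backprop`."""
    grad = []
    for i in range(len(p)):
        plus, minus = p[:], p[:]
        plus[i] += eps
        minus[i] -= eps
        grad.append((loss(plus, data) - loss(minus, data)) / (2 * eps))
    return grad


XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
random.seed(0)
params = [random.uniform(-1, 1) for _ in range(9)]

# Backprop gradients should agree closely with finite differences.
analytic = backprop(params, XOR_DATA)
numeric = numerical_grad(params, XOR_DATA)
max_diff = max(abs(a - b) for a, b in zip(analytic, numeric))
print(f"max gradient mismatch: {max_diff:.2e}")

# Plain gradient descent: w <- w - lr * dL/dw
initial_loss = loss(params, XOR_DATA)
lr = 0.5
for _ in range(1000):
    grad = backprop(params, XOR_DATA)
    params = [w - lr * g for w, g in zip(params, grad)]
print(f"loss: {initial_loss:.4f} -> {loss(params, XOR_DATA):.4f}")
```

With only two hidden units, gradient descent on XOR can stall in a poor local minimum depending on the initialization, so this sketch only reports that the loss decreases rather than promising a perfect fit.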

The significance of backpropagation cannot be overstated; it resurrected the field during a time when interest had waned, enabling researchers to build multi-layer neural networks capable of tackling far more challenging tasks. This era saw the successful implementation of these networks in several applications, including handwritten digit recognition and basic speech recognition, setting the stage for advancements in machine learning.

- Advantages of Backpropagation:

  - Made it practical to train networks with hidden layers, not just a single layer.

  - Improved prediction accuracy on tasks a single-layer perceptron could not solve.

  - Enabled the handling of more complex datasets.

- Emergence of Frameworks (decades later):

  - Software libraries released in the 2010s, such as TensorFlow and PyTorch, automated gradient computation and streamlined neural network implementation.

  - Broader access to high-performance computing, including GPUs, allowed wider experimentation with deeper architectures.

As computing capabilities evolved and data availability surged, the groundwork laid during this period proved invaluable. In the 2000s and 2010s, researchers found that, with sufficient data and computational power, much deeper neural networks could achieve unprecedented results. The resulting renaissance not only improved recognition tasks in imagery, language processing, and more, but also profoundly influenced everyday technological interactions and applications.

In tracing the evolution from the Perceptron through to backpropagation and the rise of deep learning, it becomes clear that each advancement was crucial. They not only addressed previous limitations but also opened new avenues of research and development that would continue shaping the frontiers of artificial intelligence.

The Evolution of Neural Networks: From Perceptron to Deep Learning

The journey of neural networks has seen transformative milestones, each enhancing their capabilities and applications. Beginning with the basic Perceptron, developed by Frank Rosenblatt in the late 1950s, neural networks initially showcased their potential for simple binary classification tasks. The Perceptron laid the groundwork for modern neural networks, exhibiting foundational principles like weighted inputs and threshold functions. However, its limitations, especially with non-linear problems, paved the way for further advancements.

As the field progressed, researchers turned to multi-layer networks, or Multi-Layer Perceptrons (MLPs), which can represent far more complex functions. Training them relied on the backpropagation algorithm, popularized in the 1980s, which made learning practical by using the chain rule to compute efficiently how the error changes with respect to every weight. As researchers recognized the potential of ever deeper networks, the term Deep Learning emerged, along with architectures containing many hidden layers.
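The error calculation behind backpropagation can be written compactly. In the standard formulation below (the notation is chosen here for illustration), layer l computes pre-activations z and activations a = σ(z) from weights w and biases b, E is the training error, η is the learning rate, L denotes the output layer, and δ_i^(l) = ∂E/∂z_i^(l) is the error signal of unit i in layer l. Each signal is computed from the layer above it, which is why the error flows backward:

```latex
\begin{aligned}
z_i^{(l)} &= \sum_j w_{ij}^{(l)}\, a_j^{(l-1)} + b_i^{(l)}, \qquad a_i^{(l)} = \sigma\!\left(z_i^{(l)}\right) \\
\delta_i^{(L)} &= \frac{\partial E}{\partial a_i^{(L)}}\; \sigma'\!\left(z_i^{(L)}\right) \\
\delta_j^{(l)} &= \Big(\sum_i w_{ij}^{(l+1)}\, \delta_i^{(l+1)}\Big)\, \sigma'\!\left(z_j^{(l)}\right) \\
\frac{\partial E}{\partial w_{ij}^{(l)}} &= \delta_i^{(l)}\, a_j^{(l-1)}, \qquad \frac{\partial E}{\partial b_i^{(l)}} = \delta_i^{(l)} \\
w_{ij}^{(l)} &\leftarrow w_{ij}^{(l)} - \eta\, \frac{\partial E}{\partial w_{ij}^{(l)}}
\end{aligned}
```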

Modern Deep Learning encompasses not only MLPs but also specialized architectures such as Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs). CNNs have transformed visual recognition tasks, such as image and video analysis, while RNNs handle sequential data such as text and audio. This evolution marks a significant shift from algorithms that depend on hand-engineered features to models that learn their own representations from data, loosely inspired by human perception.

| Category | Advantage |
|---|---|
| Scalability | Neural networks can effectively scale with larger datasets, improving accuracy and performance. |
| Versatility | They can be applied across various domains, from image processing to natural language processing. |

The importance of training data cannot be overstated in the realm of neural networks. The evolution from simple models to complex deep learning algorithms highlights the growing need for vast amounts of high-quality data to achieve accurate results. Advances in computing power, particularly GPUs, whose massively parallel arithmetic suits the matrix operations at the core of neural networks, have also accelerated training, making neural networks more accessible for research and commercial applications.

As we delve deeper into the capabilities of neural networks, it’s crucial to recognize the ethical implications these technologies introduce. Issues surrounding bias in training data, transparency in decision-making, and the potential for misuse must be considered as neural networks continue to advance.

The Rise of Deep Learning and its Impact

The 21st century ushered in a transformative period for neural networks, characterized by the advent of deep learning, a subfield built on neural networks with many layers, known as deep neural networks (DNNs). Unlike shallow networks, DNNs can learn hierarchical feature representations, automatically extracting relevant information from raw data instead of relying on hand-engineered features. This shift has revolutionized various fields, enabling significant progress in areas such as computer vision, natural language processing, and even game playing.

The surge in deep learning popularity can be traced back to a few key developments. The creation of large labeled data sets, like ImageNet in 2009, provided a treasure trove for training robust neural networks on tasks such as image classification. Coupled with the increased computational power from GPUs (Graphics Processing Units), which allowed for faster training of complex models, deep learning started to gain traction among researchers and enthusiasts alike.

Transformative Algorithms and Architectures

As the deep learning landscape expanded, several architectures rose to prominence and drastically improved the capabilities of neural networks. Chief among them were Convolutional Neural Networks (CNNs), which proved exceptionally adept at identifying patterns in spatial data such as images. CNNs have been instrumental in powering modern image recognition technologies, such as facial recognition systems and automated medical diagnostics. Because they recognize patterns hierarchically, from edges and textures up to complex objects, CNNs have reached accuracy levels that match or exceed human performance on certain benchmarks.
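The sliding-window pattern detection that CNNs rely on can be sketched in a few lines. Like most deep learning libraries, the function below actually computes a cross-correlation (the kernel is not flipped), which is what CNN layers conventionally call convolution. The tiny image and the hand-set vertical-edge kernel are assumptions for illustration; in a trained CNN the kernel values are learned by backpropagation.

```python
def conv2d(image, kernel):
    """'Valid' 2-D cross-correlation with stride 1 and no padding.

    At each position, the kernel is laid over the image and the element-wise
    products are summed into one output value.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


# A 4x5 "image": dark (0) on the left, bright (1) on the right.
image = [
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
]
# Hand-set vertical-edge detector: right column minus left column.
kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]
for row in conv2d(image, kernel):
    print(row)  # prints [3, 3, 0] on each of the two rows
```

The strong responses in the first two output columns mark where dark pixels meet bright ones, while the uniformly bright region on the right produces 0. Stacking such layers lets later filters combine edge responses into textures and object parts.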

Recurrent Neural Networks (RNNs), designed for sequential data, were another significant development. By maintaining a hidden state that is updated at each step of a sequence, they proved to be game-changers in natural language processing, helping machines understand and generate human language far more fluently than earlier approaches. Long Short-Term Memory (LSTM) networks, introduced in 1997, further improved RNNs: their gating mechanisms mitigate the vanishing-gradient problem that makes long-term dependencies hard to learn, which suits them to applications such as machine translation and sentiment analysis.
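The core of a plain RNN is a single update rule applied at every time step, h_t = tanh(W_xh x_t + W_hh h_(t-1) + b_h), so the hidden state carries information from earlier inputs forward. The sketch below implements that vanilla recurrence in plain Python. The weights are hypothetical, hand-picked values, and the sketch deliberately omits the input, forget, and output gates that an LSTM adds. Feeding the same inputs in reverse order yields a different final state, showing that the network is sensitive to sequence order.

```python
import math


def rnn_step(x_t, h_prev, W_xh, W_hh, b_h):
    """One vanilla RNN step: h_t = tanh(W_xh x_t + W_hh h_prev + b_h)."""
    return [
        math.tanh(
            sum(W_xh[i][k] * x_t[k] for k in range(len(x_t)))
            + sum(W_hh[i][k] * h_prev[k] for k in range(len(h_prev)))
            + b_h[i]
        )
        for i in range(len(b_h))
    ]


def run_rnn(sequence, W_xh, W_hh, b_h):
    """Process a sequence one step at a time, reusing the same weights at every step."""
    h = [0.0] * len(b_h)  # initial hidden state: all zeros
    states = []
    for x_t in sequence:
        h = rnn_step(x_t, h, W_xh, W_hh, b_h)
        states.append(h)
    return states


# Hypothetical hand-picked weights: 1-dimensional inputs, 2 hidden units.
W_xh = [[1.0], [-0.5]]
W_hh = [[0.5, -0.3], [0.2, 0.8]]
b_h = [0.0, 0.1]

seq = [[1.0], [0.0], [-1.0]]
forward_states = run_rnn(seq, W_xh, W_hh, b_h)
reversed_states = run_rnn(seq[::-1], W_xh, W_hh, b_h)
# Same inputs in a different order lead to a different final hidden state.
print(forward_states[-1])
print(reversed_states[-1])
```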

- Other Notable Advances:

  - Generative Adversarial Networks (GANs): Introduced by Ian Goodfellow et al. in 2014, GANs train two networks against each other, a generator and a discriminator, opening new frontiers in generating realistic images and augmenting datasets.

  - Transformers: Introduced in 2017, Transformers use self-attention to process all positions of an input sequence in parallel rather than one step at a time, laying the foundation for powerful models like BERT and GPT.

- Applications in the Real World:

  - Healthcare: Deep learning has been used for diagnostics through image analysis of X-rays and MRIs, for predicting disease outbreaks, and for personalizing treatment plans.

  - Autonomous Vehicles: CNNs for perception, combined with sequence models such as RNNs, have driven advances in self-driving technology, helping vehicles interpret their surroundings and make real-time decisions.
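The "whole sequence at once" idea behind Transformers comes from self-attention. Below is a minimal, single-head sketch of scaled dot-product attention, softmax(QK^T / √d_k)V, the operation introduced with the Transformer architecture (Vaswani et al., 2017). The toy Q, K, and V matrices are assumptions; a real model derives them from learned linear projections of token embeddings and adds multiple heads, masking, and positional information.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(Q, K, V):
    """Compute softmax(Q K^T / sqrt(d_k)) V row by row.

    Every query is compared with every key, so each output position can draw
    on the entire sequence rather than only on earlier steps, as in an RNN.
    """
    d_k = len(K[0])
    outputs, weights = [], []
    for q in Q:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        w = softmax(scores)
        weights.append(w)
        # Each output is a weighted average of the value vectors.
        outputs.append([sum(w[j] * V[j][c] for j in range(len(V))) for c in range(len(V[0]))])
    return outputs, weights


# Toy 3-token sequence with d_k = 2 (hypothetical values).
Q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
V = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
out, attn = scaled_dot_product_attention(Q, K, V)
for row in attn:
    print([round(w, 3) for w in row])
```

Each row of attention weights sums to 1, so every output is a convex combination of the value vectors; the division by √d_k keeps dot products from growing with the vector size and saturating the softmax.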

The migration from simple perceptrons to complex deep learning architectures illustrates a fascinating journey fueled by innovation and necessity. Each leap in understanding and technology has widened the scope of what is achievable with neural networks, influencing not only the field of artificial intelligence but also profoundly impacting everyday life. Whether it’s voice-activated personal assistants, smart recommendation systems, or next-generation robotics, the ripples of deep learning continue to expand into all corners of modern existence, leaving an indelible mark on the technological landscape.

Conclusion: Charting a New Course in Artificial Intelligence

The evolution of neural networks from the foundational perceptron to the sophisticated architectures of deep learning has been nothing short of remarkable. This journey illustrates how initial concepts, once deemed simplistic, have paved the way for a technological revolution that drives countless innovations today. By harnessing the power of deep neural networks with their multi-layer capabilities, researchers have been able to address increasingly complex problems, leading to breakthroughs in diverse fields such as computer vision, natural language processing, and unmanned systems.

As we navigate through this dynamic landscape, it’s clear that the contributions of advanced models—ranging from Convolutional Neural Networks (CNNs) to Generative Adversarial Networks (GANs)—have redefined our understanding of machine intelligence and its applications. The rise of transformers in NLP has further demonstrated that the potential of neural networks is only beginning to be explored, paving the way for future innovations that could revolutionize how we interact with technology.

Looking ahead, the integration of these robust algorithms into our daily lives signals a paradigm shift that encourages further investigation into the ethical implications and future capabilities of artificial intelligence. As we stand on the cusp of a new era in AI, understanding the historical context of neural networks will be crucial for anyone wishing to engage with the technology shaping our future. This transformative journey continues, and it urges enthusiasts, researchers, and the general public alike to keep pace with unfolding discoveries that promise to alter the fabric of society.