A Deep Dive into Neural Network Evolution

The evolution of neural networks is a compelling story of technological advancement that mirrors the broader narrative of artificial intelligence itself. At the heart of this journey is the perceptron, introduced by Frank Rosenblatt in 1958. This pioneering algorithm was loosely inspired by the behavior of biological neurons and became the bedrock of neural computation. The perceptron was an early milestone in supervised learning: it learned to classify data by adjusting its weights whenever it misclassified a labeled example.

As the years progressed, the landscape of neural networks transformed dramatically. The limitations of the perceptron became apparent, particularly its inability to solve problems that are not linearly separable, such as the XOR function, which led researchers to seek more complex architectures. The popularization of the backpropagation algorithm in 1986 by Rumelhart, Hinton, and Williams marked a significant turning point. Backpropagation made it possible for multilayer networks, the precursors of today's deep learning models, to learn effectively from their errors. A classic example is training a network for image recognition: after each batch of labeled images, the error at the output is propagated backward and every layer's weights are adjusted to reduce future misclassifications.

The watershed moment for neural networks came in 2012, when AlexNet won the ImageNet Large Scale Visual Recognition Challenge. AlexNet demonstrated the power of deep convolutional networks, reducing the top-5 classification error to about 15%, compared with roughly 26% for the next-best entry. This breakthrough positioned deep learning at the forefront of artificial intelligence research and opened the floodgates for applications across many sectors. Companies like Google and Facebook began integrating these techniques into their products, affecting everything from image search to facial recognition.

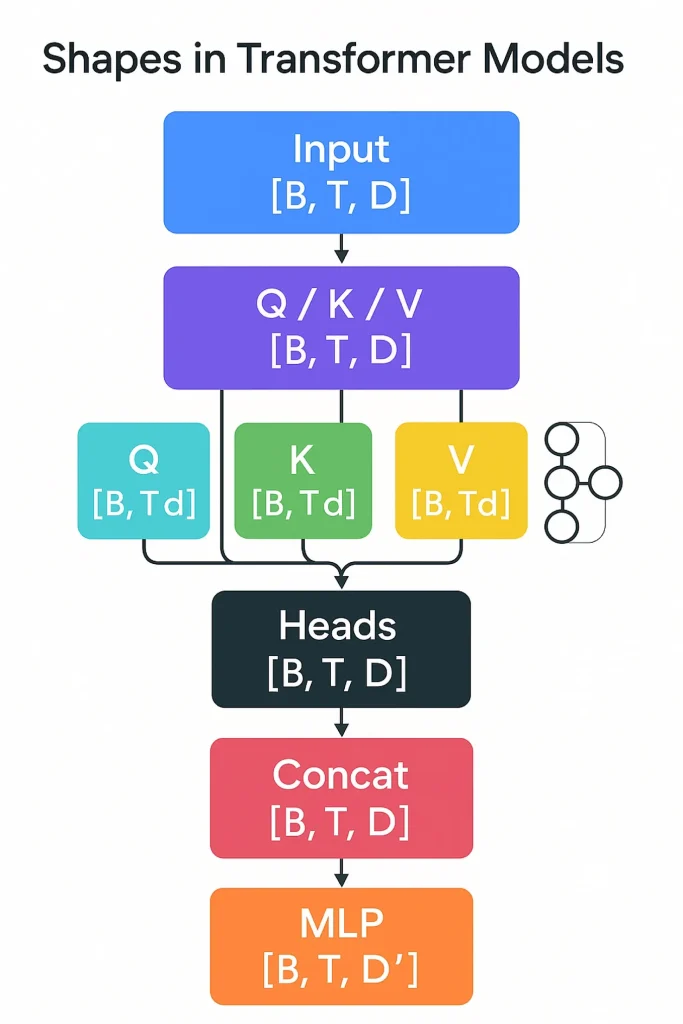

Fast forward to the present day, and we find ourselves in an age dominated by transformer architectures. Introduced in 2017, transformers replace recurrence with an attention mechanism and have surpassed earlier neural network designs, particularly in natural language processing. Models like BERT and GPT-3 illustrate the transformer’s ability to model context and generate human-like text. The implications are profound: think of AI-driven chatbots that hold fluent conversations, or translation services that convert text between languages while preserving much of the original meaning.
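To make “attention” concrete, here is a minimal sketch of scaled dot-product attention, the operation at the core of the transformer (Vaswani et al., 2017), written in Python with NumPy. It is a single attention head with no masking, and the random matrices `W_Q`, `W_K`, and `W_V` are hypothetical stand-ins for weights a real model would learn; production transformers add multiple heads, feed-forward layers, and normalization on top of this.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V):
    """softmax(Q K^T / sqrt(d_k)) V for a single head, without masking.
    Q: (n_queries, d_k), K: (n_keys, d_k), V: (n_keys, d_v)."""
    d_k = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)     # similarity of every query to every key
    weights = softmax(scores, axis=-1)  # each row is a distribution over positions
    return weights @ V, weights

rng = np.random.default_rng(0)
seq_len, d_model = 4, 8
X = rng.normal(size=(seq_len, d_model))  # stand-in token embeddings
# Hypothetical random projections standing in for learned parameters
W_Q, W_K, W_V = (rng.normal(size=(d_model, d_model)) for _ in range(3))
output, weights = scaled_dot_product_attention(X @ W_Q, X @ W_K, X @ W_V)
print(output.shape)         # (4, 8): one context-mixed vector per token
print(weights.sum(axis=1))  # each row of attention weights sums to 1
```

Each token's output is a weighted average of every token's value vector, which is how the model mixes in context from the whole sequence.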

As further advances arrive, the impact of neural networks is already practical rather than merely theoretical. They are reshaping industries such as healthcare, where predictive models can support earlier diagnosis of disease, and finance, where automated trading systems use them in pursuit of a competitive edge. Such applications show how the technology can address complex challenges, paving the way for innovations we have yet to imagine.

This chronological exploration invites us to contemplate the future of deep learning and the possibilities that lie ahead. With advances arriving at a rapid pace, it is exciting to anticipate the next wave of developments in neural networks. What applications or breakthroughs could emerge next? Researchers and technology enthusiasts alike are eager for the next chapter in this remarkable journey.


The Journey from Simple Structures to Complex Models

The evolution of neural networks has been characterized by continual innovation, reflecting the growing demand for smarter systems in various domains. At the core of this transformation lie several key developments that paved the way for modern deep learning. To understand this journey, it’s vital to explore how each milestone contributed to the robust architectures we see today.

The perceptron was one of the first practical learning machines. Its architecture is straightforward: input nodes, a weight for each input plus a bias, a step activation function, and a single output node. Despite its significance, the perceptron had an inherent limitation: as a linear classifier, it cannot solve problems whose classes are not linearly separable. This realization ushered in an era of enhanced algorithms and multilayer models.
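The perceptron's learning rule and its linear-separability limit can be illustrated in a few lines. The sketch below assumes Python with NumPy and uses hypothetical helpers `train_perceptron` and `predict`; it learns the AND function, which is linearly separable, but cannot learn XOR, because no single straight line separates XOR's two classes.

```python
import numpy as np

def train_perceptron(X, y, epochs=20, lr=1.0):
    """Perceptron learning rule with a step activation. Labels y must be 0 or 1."""
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(epochs):
        errors = 0
        for xi, target in zip(X, y):
            pred = 1 if xi @ w + b > 0 else 0  # step activation
            update = lr * (target - pred)      # zero when the prediction is correct
            w += update * xi
            b += update
            errors += int(update != 0)
        if errors == 0:  # every training point classified correctly: stop early
            break
    return w, b

def predict(X, w, b):
    return (X @ w + b > 0).astype(int)

X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)
y_and = np.array([0, 0, 0, 1])
y_xor = np.array([0, 1, 1, 0])

w_and, b_and = train_perceptron(X, y_and)
print(predict(X, w_and, b_and))  # [0 0 0 1]
w_xor, b_xor = train_perceptron(X, y_xor)
print(predict(X, w_xor, b_xor))  # never equals y_xor: XOR is not linearly separable
```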

About a decade after the perceptron appeared, research took a detour. Following Marvin Minsky and Seymour Papert's 1969 analysis of the perceptron's limitations, interest in neural networks waned through the 1970s, a period often associated with the first “AI winter.” The popularization of the backpropagation algorithm in 1986 reignited excitement. Backpropagation uses the chain rule to compute the gradient of the loss function with respect to every weight, layer by layer, so that networks with hidden layers can be trained to learn complex, non-linear patterns.

Some critical aspects of the backpropagation algorithm include:

- Backward Error Propagation: The error measured at the final output is propagated backward through the network, and each layer's weights are updated according to their contribution to that error.

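- Non-Linear Activation Functions: Backpropagation relies on activation functions with usable gradients, such as the sigmoid used in early work and, later, ReLU (Rectified Linear Unit). These functions allow networks to model complicated relationships, expanding their potential applications.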

- Scalability: Backpropagation enabled the development of deeper architectures, which laid the groundwork for the rise of deep learning.
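The backpropagation steps above can be sketched for a tiny network with one hidden layer. This is an illustrative NumPy example, not the formulation of any specific paper: the tanh hidden layer, sigmoid output, mean-squared-error loss, learning rate, and the helper names `forward_backward` and `train` are all assumptions chosen for brevity. The target is XOR, the problem a single perceptron cannot solve.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def forward_backward(params, X, y):
    """Forward pass plus backpropagation for a 1-hidden-layer MLP.
    Returns the mean squared error and its gradient w.r.t. every parameter."""
    W1, b1, W2, b2 = params["W1"], params["b1"], params["W2"], params["b2"]
    # Forward pass
    a1 = np.tanh(X @ W1 + b1)   # hidden activations, shape (n, hidden)
    out = sigmoid(a1 @ W2 + b2)  # network output, shape (n, 1)
    loss = np.mean((out - y) ** 2)
    # Backward pass: chain rule from the output layer back toward the input
    d_out = 2.0 * (out - y) / X.shape[0]  # dL/d(out)
    d_z2 = d_out * out * (1.0 - out)      # sigmoid'(z) = s(z) * (1 - s(z))
    grads = {"W2": a1.T @ d_z2, "b2": d_z2.sum(axis=0)}
    d_a1 = d_z2 @ W2.T                    # error propagated back to the hidden layer
    d_z1 = d_a1 * (1.0 - a1 ** 2)         # tanh'(z) = 1 - tanh(z)^2
    grads["W1"] = X.T @ d_z1
    grads["b1"] = d_z1.sum(axis=0)
    return loss, grads

def train(X, y, hidden=4, lr=0.5, steps=5000, seed=0):
    rng = np.random.default_rng(seed)
    params = {
        "W1": rng.normal(size=(X.shape[1], hidden)),
        "b1": np.zeros(hidden),
        "W2": rng.normal(size=(hidden, 1)),
        "b2": np.zeros(1),
    }
    losses = []
    for _ in range(steps):
        loss, grads = forward_backward(params, X, y)
        losses.append(loss)
        for k in params:  # plain gradient descent update
            params[k] -= lr * grads[k]
    return params, losses

# XOR inputs and targets: not linearly separable
X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)
y = np.array([[0], [1], [1], [0]], dtype=float)
params, losses = train(X, y)
print(losses[0], losses[-1])  # the loss falls as training proceeds

# Gradient check: compare one analytic gradient with a central finite difference
loss0, grads0 = forward_backward(params, X, y)
eps = 1e-6
plus = {k: v.copy() for k, v in params.items()}
minus = {k: v.copy() for k, v in params.items()}
plus["W1"][0, 0] += eps
minus["W1"][0, 0] -= eps
numeric = (forward_backward(plus, X, y)[0] - forward_backward(minus, X, y)[0]) / (2 * eps)
print(numeric, grads0["W1"][0, 0])  # the two values should agree closely
```

Comparing analytic gradients against finite differences, as in the last few lines, is a common way to confirm that a hand-written backward pass is correct.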

The 1990s and 2000s witnessed further enhancements with the development of Convolutional Neural Networks (CNNs), such as Yann LeCun's networks for handwritten digit recognition, and other specialized architectures. Later advances, including dropout regularization (introduced around 2012) and faster hardware, played a pivotal role in making deep networks practical to train. By employing convolutional layers, CNNs could extract features from images, facilitating breakthroughs in computer vision and dramatic improvements on performance benchmarks.
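Dropout is simple enough to show directly. This sketch implements the “inverted” variant that many frameworks use, in which surviving activations are rescaled during training so nothing needs to change at inference time; the function name `dropout` and its parameters are illustrative, not a library API.

```python
import numpy as np

def dropout(activations, p_drop, rng, training=True):
    """Inverted dropout: zero each unit with probability p_drop during training and
    scale the survivors by 1 / (1 - p_drop) so the expected activation is unchanged.
    At inference time the input is returned untouched."""
    if not training or p_drop == 0.0:
        return activations
    keep = rng.random(activations.shape) >= p_drop  # boolean mask of surviving units
    return activations * keep / (1.0 - p_drop)

rng = np.random.default_rng(0)
h = np.ones((1000, 100))  # a batch of hidden activations
out = dropout(h, p_drop=0.5, rng=rng)
print(out.mean())          # close to 1.0 because survivors are scaled up
print((out == 0).mean())   # close to 0.5, the drop probability
```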

As neural networks continued to gain momentum, the field became multidisciplinary, attracting attention from various sectors including automotive, healthcare, and entertainment. The shift from shallow networks to deep architectures showcased the adaptability of neural networks, as they began eclipsing traditional models in tasks like speech recognition and machine translation.

In hindsight, each phase in the evolution of neural networks not only improved computational abilities but also opened up entirely new fields of research and applications. As these advancements became foundational, they gradually filled the gap between theoretical potential and practical implementation, leading us to the sophisticated deep learning systems that we rely on today. The subsequent sections will delve deeper into these developments, tracing how the innovations have shaped the capabilities of neural networks in unpredictable and exciting ways.

The Evolution of Neural Networks: From Perceptrons to Deep Learning

As we journey through the remarkable history of neural networks, we uncover the pivotal advancements that have transformed artificial intelligence and machine learning. Beginning with the inception of the perceptron in the 1950s, we see the foundation laid for what would eventually evolve into sophisticated deep learning models. The perceptron, developed by Frank Rosenblatt, was a groundbreaking linear classifier that ignited interest in mathematical models of neurons. However, because it could not solve problems that are not linearly separable, this early model sparked a quest for greater complexity and capability in neural architectures.

In the subsequent decades, researchers built more elaborate networks, leading to multilayer perceptrons (MLPs) with one or more hidden layers of nodes. Hidden layers allow a network to learn intricate mappings from inputs to outputs; the universal approximation theorem shows that an MLP with a single hidden layer and enough units can approximate any continuous function on a closed, bounded domain to arbitrary accuracy, which significantly broadened the range of applications. The importance of backpropagation, popularized in the 1980s, cannot be overstated: it gave MLPs an efficient way to learn from their errors, a critical step forward in training.

Convolutional neural networks (CNNs), developed largely for image processing tasks, rose to prominence in the 1990s. CNNs revolutionized the field by using specialized layers that learn spatial hierarchies of features in data, from edges to shapes to whole objects. This innovation has led to their widespread adoption across domains such as computer vision, natural language processing, and even gaming.
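The idea of a convolutional layer can be sketched with a single filter. In this minimal NumPy example, the `conv2d` helper is hypothetical and the edge-detecting kernel is chosen by hand; in a real CNN the kernel values are learned, and many filters per layer are stacked across several layers to build spatial hierarchies.

```python
import numpy as np

def conv2d(image, kernel):
    """'Valid' 2-D convolution as used in CNN layers. Strictly this is a
    cross-correlation (the kernel is not flipped), the usual deep learning convention."""
    kh, kw = kernel.shape
    oh = image.shape[0] - kh + 1
    ow = image.shape[1] - kw + 1
    out = np.zeros((oh, ow))
    for i in range(oh):
        for j in range(ow):
            # The same small set of weights is applied at every position (weight sharing)
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# A 5x6 image: dark left half, bright right half, i.e. a vertical edge
image = np.array([[0, 0, 0, 1, 1, 1]] * 5, dtype=float)
# Hand-picked vertical-edge detector; a CNN would learn weights like these
kernel = np.array([[-1, 0, 1],
                   [-1, 0, 1],
                   [-1, 0, 1]], dtype=float)
feature_map = conv2d(image, kernel)
print(feature_map)  # each row is [0. 3. 3. 0.]: strong response only at the edge
```

The filter responds only at the boundary between the dark and bright regions, which is the kind of low-level feature early CNN layers tend to detect.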

| Category | Description |
|---|---|
| Advancements in Learning Techniques | Innovations like backpropagation have drastically improved training efficiency. |
| Deep Learning Models | Models like CNNs and RNNs enabled breakthroughs in tasks such as image recognition and language processing. |

With the development of algorithms and hardware capable of handling vast datasets, the era of deep learning began, characterized by architectures such as deep belief networks and recurrent neural networks (RNNs). This phase produced models that excel at understanding sequential data, making it practical to tackle complex tasks ranging from real-time language translation to predictive analytics in various industries.

The convergence of advances in hardware, algorithms, and large datasets has brought us to a point where neural networks play a pivotal role in our everyday lives, often without our conscious awareness. As we explore this evolution further, it becomes evident that the chronology of these developments illustrates both the growing sophistication of neural networks and their vast potential to reshape technology and society as a whole.


Unleashing the Power of Deep Learning

As the understanding and capabilities of neural networks expanded, the field witnessed the advent of a new paradigm: deep learning. While traditional neural networks relied on a few hidden layers, deep learning architectures, characterized by numerous layers, began to reveal unprecedented performance in a variety of tasks. This shift from shallow to deep networks revolutionized fields such as natural language processing and computer vision, showcasing the true potential of neural networks.

The introduction of Deep Belief Networks (DBNs) in 2006, along with the revival of autoencoders as deep, layer-wise pretrained models in the second half of the 2000s, marked significant progress in unsupervised learning. DBNs stacked generative models (restricted Boltzmann machines), trained one layer at a time, to learn hierarchies of features from unlabeled data. Autoencoders, on the other hand, are trained to compress data into a compact representation and then reconstruct it, opening up new avenues for anomaly detection and data denoising. These innovations paved the way for major gains in image and speech recognition, which later approached human-level performance on some benchmarks.
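The compress-then-reconstruct idea behind autoencoders can be shown with the simplest possible case: a linear autoencoder trained by gradient descent. This is a hedged sketch, not a DBN or a deep autoencoder; the synthetic data, the two-unit bottleneck, the learning rate, and the helper name `train_linear_autoencoder` are all assumptions. The data is built to lie on a two-dimensional subspace, so a two-value code is enough to reconstruct it.

```python
import numpy as np

rng = np.random.default_rng(0)
# Synthetic 4-D data that actually lies on a 2-D subspace
Z = rng.normal(size=(200, 2))
A = rng.normal(size=(2, 4))
X = Z @ A

def train_linear_autoencoder(X, code_dim=2, lr=0.01, steps=3000, seed=0):
    """Encode x -> x @ W_enc (code_dim values), decode code -> code @ W_dec.
    Trained by gradient descent on the mean squared reconstruction error."""
    rng = np.random.default_rng(seed)
    d = X.shape[1]
    W_enc = rng.normal(scale=0.1, size=(d, code_dim))
    W_dec = rng.normal(scale=0.1, size=(code_dim, d))
    losses = []
    for _ in range(steps):
        code = X @ W_enc      # compressed representation
        recon = code @ W_dec  # reconstruction of the input
        err = recon - X
        losses.append(np.mean(err ** 2))
        grad_out = 2.0 * err / err.size  # dL/d(recon)
        grad_dec = code.T @ grad_out
        grad_enc = X.T @ (grad_out @ W_dec.T)
        W_enc -= lr * grad_enc
        W_dec -= lr * grad_dec
    return W_enc, W_dec, losses

W_enc, W_dec, losses = train_linear_autoencoder(X)
print(losses[0], losses[-1])  # reconstruction error falls as training proceeds
```

Practical autoencoders add non-linear activations and more layers, which lets them capture structure that a linear model like this one cannot.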

In 2012, the landscape of deep learning underwent another dramatic transformation. AlexNet, a deep convolutional neural network, achieved a breakthrough in image classification at the ImageNet competition. Cutting error rates sharply compared with its predecessors, AlexNet harnessed GPUs to make large-scale training on a vast dataset feasible. This achievement ignited a deep learning renaissance, captivating researchers and industrial practitioners around the globe.

The rapid advancement of deep learning technology was further fueled by three critical factors:

- The Availability of Big Data: With the explosion of data generated daily—from social media interactions to the Internet of Things—deep learning models thrived on ample data, refining their accuracy and learning capabilities.

- Enhanced Computational Power: The availability of Graphics Processing Units (GPUs) and specialized hardware like Tensor Processing Units (TPUs) has drastically reduced the time required for training deep learning models, making real-time analysis and decision-making feasible.

- Open Source Frameworks: Platforms such as TensorFlow, PyTorch, and Keras democratized access to powerful tools for building neural networks, enabling developers and researchers—regardless of their backgrounds—to experiment with and deploy deep learning applications easily.

Notably, the subfields of deep learning began to diversify. Recurrent Neural Networks (RNNs) and their variants, such as Long Short-Term Memory (LSTM) networks, drove advances in sequential data processing. Because they carry a hidden state from one step to the next, RNNs became instrumental in tasks requiring context awareness, leading to significant breakthroughs in applications ranging from language translation to sentiment analysis. Meanwhile, Generative Adversarial Networks (GANs) introduced a novel approach to synthesizing new data, pushing conventional boundaries in art and design as AI-generated images and music began to emerge.
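How an RNN carries context can be sketched with a vanilla (Elman-style) recurrent layer; LSTMs add gating on top of this basic recurrence to help preserve information over longer sequences. In this illustrative NumPy example the weights are random rather than trained, and `rnn_forward` is a hypothetical helper. Changing only the first element of a sequence still changes the final hidden state, showing that earlier inputs influence later steps.

```python
import numpy as np

def rnn_forward(inputs, W_xh, W_hh, b_h):
    """Run a vanilla RNN over a sequence:
    h_t = tanh(x_t @ W_xh + h_{t-1} @ W_hh + b_h). Returns every hidden state."""
    h = np.zeros(W_hh.shape[0])
    states = []
    for x_t in inputs:  # process the sequence one time step at a time, in order
        h = np.tanh(x_t @ W_xh + h @ W_hh + b_h)
        states.append(h)
    return np.stack(states)

rng = np.random.default_rng(0)
input_dim, hidden_dim = 3, 5
W_xh = rng.normal(scale=0.5, size=(input_dim, hidden_dim))
W_hh = rng.normal(scale=0.5, size=(hidden_dim, hidden_dim))
b_h = np.zeros(hidden_dim)

seq_a = rng.normal(size=(6, input_dim))
seq_b = seq_a.copy()
seq_b[0] += 1.0  # change only the FIRST element of the sequence
states_a = rnn_forward(seq_a, W_xh, W_hh, b_h)
states_b = rnn_forward(seq_b, W_xh, W_hh, b_h)
# The final hidden states differ: information from step 0 persisted to step 5
print(np.abs(states_a[-1] - states_b[-1]).max())
```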

The impact of deep learning extends beyond academia into industry, fundamentally disrupting business models. Companies like Google, Facebook, and Tesla harness deep learning for personalized recommendations, autonomous driving, and advanced customer interactions through chatbots, all highlighting its capacity to revolutionize service delivery and operational efficiency.

As we reflect on the trajectory of neural networks from simple perceptrons to cutting-edge deep learning models, it becomes evident that this journey has not only expanded our technical understanding but has also equipped us with tools that dramatically reshape our world. Continuous investments in research and the refinement of techniques signal the ongoing evolution, promising even more profound advancements in the near future.


Conclusion: The Future of Neural Networks

The evolution of neural networks from simple perceptrons to sophisticated deep learning models marks a transformative journey in the realm of artificial intelligence. This progression represents not just a refinement of techniques but a fundamental shift in how machines understand and interact with the world. Each breakthrough, from the early days of single-layer networks to contemporary architectures like transformers, has paved the way for unparalleled advancements in various sectors including healthcare, finance, and entertainment.

The rise of deep learning has been catalyzed by pivotal factors such as the availability of big data, enhanced computational power, and the democratization of AI through open-source frameworks. These developments have enabled rapid experimentation and innovation, making it easier for a diverse group of researchers and developers to contribute to this exciting field. As we witness incredible applications—from language generation and facial recognition to autonomous systems—it is evident that deep learning is not merely a tool; it is reshaping the fabric of our daily lives.

Looking ahead, the future of neural networks is brimming with potential. Responsible research and careful attention to ethical considerations will be paramount as these technologies continue to evolve. The exploration of new architectures, coupled with advancements in explainable AI and edge computing, will likely redefine our approach to AI challenges. As we stand at this crossroads, one thing is clear: the ongoing evolution of neural networks will not only propel technological progress but also unlock new realms of human understanding and capability.