The Evolution of Intelligent Agents

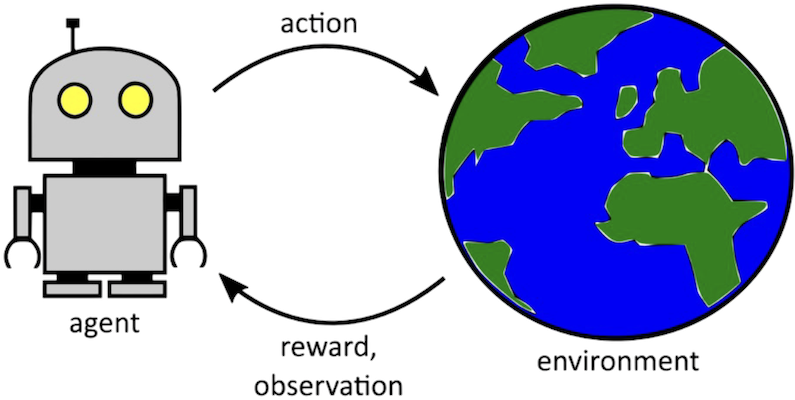

The rise of intelligent agents in autonomous decision-making has revolutionized various aspects of our daily lives, but it also poses significant ethical dilemmas. As we integrate these sophisticated systems into critical areas such as transportation and healthcare, the decisions they make carry heavy implications for individuals and society as a whole.

Autonomous Vehicles

Consider autonomous vehicles, which promise to enhance road safety and reduce traffic fatalities. However, the question arises: what happens during an unavoidable crash? The AI must make split-second decisions that could lead to life-or-death outcomes. This scenario echoes the thought experiment known as the “trolley problem,” in which every available choice causes harm and one must pick the lesser of two evils. Should an autonomous vehicle prioritize the lives of its passengers over pedestrians, or vice versa? This dilemma remains a significant concern, as decisions made by these systems challenge the moral values we uphold in human interactions.

Healthcare AI

The application of AI in the healthcare sector also raises profound questions. Algorithms that determine treatment protocols may optimize for efficiency or cost-effectiveness, potentially sidelining unique patient needs. For instance, an AI might recommend a standardized treatment protocol that overlooks individual medical histories or personal preferences, leading to subpar patient care. Furthermore, AI-based triage can prioritize cases based on statistical models rather than the holistic understanding that comes from a human practitioner. Ethical guidelines are therefore needed to govern how such decisions are made and reviewed.

Surveillance and Privacy

Surveillance systems powered by AI also bring forth ethical issues related to privacy and civil liberties. Intelligent surveillance can enhance public safety, but the methods employed can intrude on individual freedoms. For example, facial recognition technology may help identify criminals, but its deployment raises critical questions about consent, data protection, and the potential for misuse. How transparent are these systems? Are citizens aware of their use, and do they have any control over how their data is processed and stored? These issues create a challenging landscape that necessitates careful regulation.

Ethical Considerations in AI Accountability

A pivotal aspect of the dialogue around intelligent agents is accountability. When an AI system makes a harmful decision, who should be held responsible—the developers who created the AI, the users who implemented it, or the AI itself? The complexity here challenges existing legal frameworks, leading to calls for new legislation that can adequately address these issues.

- Accountability: Clarifying accountability in AI decisions can influence future developments and public trust.

- Transparency: The demand for transparency in AI decision-making processes is gaining momentum, with advocates calling for systems that can provide understandable justifications for their choices.

- Bias: It is critical to implement measures that prevent biases in AI algorithms, given that biased data can lead to discriminatory outcomes in important areas such as hiring or law enforcement.

As we navigate this complex intersection of technology and morality, the discussion surrounding intelligent agents and ethics is more important than ever. The decisions made by these systems today will shape the future of technology and society. Engaging with these pressing issues is not just an academic exercise; it is a necessary step as we tread further into an era where intelligent agents increasingly influence our lives.


Challenges in Ethical Frameworks for Intelligent Agents

The integration of intelligent agents into daily decision-making processes necessitates a reevaluation of long-standing ethical frameworks. As these systems become increasingly autonomous, their decision-making capabilities raise profound questions about morality, accountability, and societal values. The ethical challenges arise not just from how these agents operate but also from their inescapable impact on human lives.

The Limitation of Existing Ethical Models

Traditional ethical models, such as utilitarianism and deontology, struggle to address the complexities posed by intelligent agents. Utilitarianism, which advocates for actions that maximize overall happiness, may lead an AI to make decisions that prioritize efficiency over individual rights. For instance, an AI managing public resources might allocate funds in a way that benefits the majority, potentially neglecting marginalized groups. Alternatively, a deontological approach that emphasizes duty and rules may restrict an AI’s ability to adapt in situations requiring flexibility and nuanced judgments.
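The contrast between the two models can be made concrete with a small sketch. It is an illustration only, not a method described in this article: the function names, the group labels, the benefit figures, and the assumption that benefit grows linearly with funding are all hypothetical. The first function maximizes total benefit; the second applies a rule first (a guaranteed minimum for every group) and optimizes only with what remains.

```python
def utilitarian_allocation(budget, benefit_per_unit):
    """Maximize total benefit: the whole budget goes to the highest-yield group."""
    best = max(benefit_per_unit, key=benefit_per_unit.get)
    return {g: (budget if g == best else 0.0) for g in benefit_per_unit}


def floor_constrained_allocation(budget, benefit_per_unit, floor):
    """Rule first, optimization second: every group is guaranteed `floor`."""
    groups = list(benefit_per_unit)
    if floor * len(groups) > budget:
        raise ValueError("budget cannot cover the guaranteed floor for every group")
    remaining = budget - floor * len(groups)
    best = max(benefit_per_unit, key=benefit_per_unit.get)
    return {g: floor + (remaining if g == best else 0.0) for g in groups}


def total_benefit(allocation, benefit_per_unit):
    """Sum of (amount allocated x benefit per unit) over all groups."""
    return sum(amount * benefit_per_unit[g] for g, amount in allocation.items())


# Hypothetical numbers, chosen only to illustrate the trade-off.
benefit = {"majority": 3.0, "minority": 2.0}
greedy = utilitarian_allocation(100.0, benefit)
guarded = floor_constrained_allocation(100.0, benefit, floor=20.0)
print(greedy, total_benefit(greedy, benefit))
print(guarded, total_benefit(guarded, benefit))
```

With these made-up figures, the purely utilitarian rule leaves the smaller group with nothing, while the floor-constrained rule accepts a lower total in exchange for a guaranteed share. Neither is presented here as the right answer; the sketch only shows that the choice of rule is itself a value judgment.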

The Dilemma of Moral Algorithms

As intelligent agents are designed to mimic human decision-making, they often rely on algorithms that embody specific ethical standards. However, determining which morals to encode into these algorithms is fraught with contention. Should AI prioritize human life above all else, or should it consider broader societal impacts? This ethical programming dilemma raises questions: who decides the values these agents will uphold, and how can we ensure that these values reflect a diverse spectrum of human experience?

Implementing Ethical Guidelines

The pressing need for structured ethical guidelines in the development and deployment of intelligent agents cannot be overstated. Several initiatives have emerged, advocating for the establishment of frameworks that ensure these systems operate within defined moral boundaries. Key areas of focus include:

- Bias Mitigation: Developing protocols to identify and rectify biases within algorithms is essential. Biased data can inadvertently lead to discriminatory practices in various fields, including healthcare and law enforcement.

- Transparency Standards: Advocates call for greater transparency in how intelligent agents make decisions. This involves creating systems that provide understandable justifications for their choices, fostering trust among users.

- Integrated Accountability Measures: Establishing clear lines of accountability for autonomous decisions is critical. This can guide policymakers in delineating the responsibility of developers, users, and the technology itself in the event of an unfavorable outcome.
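As one illustration of what a bias-identification protocol might measure, the sketch below computes the gap in favorable-decision rates between groups, a quantity commonly called the demographic parity difference. The function names and the sample data are hypothetical, and this is only one of several fairness metrics; a small gap on this measure does not by itself show that a system is fair.

```python
from collections import defaultdict


def selection_rates(decisions, groups):
    """Share of favorable decisions (1) per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += int(decision)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(decisions, groups):
    """Gap between the highest and lowest group selection rates (0 = parity)."""
    rates = selection_rates(decisions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample: 1 = favorable decision, 0 = unfavorable.
decisions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
print(selection_rates(decisions, groups))
print(demographic_parity_difference(decisions, groups))
```

A check like this could run on logged decisions at regular intervals, with a large gap triggering human review rather than an automatic correction.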

As the landscape of autonomous decision-making evolves, the ethical implications of incorporating intelligent agents must be a paramount focus. The decisions made by these systems will shape how society perceives and interacts with technology, pressing us to reflect on the moral principles that govern our shared existence. Moreover, as we forge ahead, the conversation surrounding ethical standards will be vital in ensuring that these intelligent agents serve humanity with integrity and respect.

| Advantage | Description |
|---|---|
| Improved Decision Making | Autonomous systems leverage data analytics to enhance accuracy in decision-making processes. |
| Ethical Framework Development | These intelligent agents can help define new ethical standards for complex scenarios where human moral reasoning may falter. |
| Increased Efficiency | Automation streamlines processes, reducing human error and increasing speed in operations. |
| Adaptability | Intelligent agents continuously adapt to new information, allowing for real-time ethical decision adjustments. |

As the integration of intelligent agents into various sectors continues to evolve, questions about ethical frameworks and their implications for autonomous decision-making systems become increasingly pertinent. One significant advantage lies in their ability to improve the accuracy of decisions made under pressure, minimizing the risk of human error. As these agents analyze vast datasets, they also pave the way for developing ethical standards that could guide human operators, especially in sensitive environments such as healthcare or autonomous vehicles.

The efficiency gained from these systems should not be underestimated either. Beyond streamlining processes and minimizing errors, organizations can benefit from adaptability: agents that learn and update their parameters based on real-time data. This agility is crucial in contexts where ethical considerations are continuously evolving, making it essential for engineers and ethicists to work collaboratively.

Overall, the future of intelligent agents in autonomous decision-making holds promise, as long as the profound ethical implications are diligently explored.


Redefining Accountability in Autonomous Systems

As intelligent agents grow in autonomy, the question of accountability becomes increasingly complex. When autonomous systems make decisions that result in negative outcomes, who is held responsible? The developers who wrote the algorithms, the organizations that deployed the agents, or the agents themselves? This ambiguity can obscure moral responsibility, complicating legal and ethical recourse for victims. Recent incidents, like autonomous vehicle accidents, highlight the urgent need for an ethical framework that delineates accountability, ensuring that developers and organizations maintain responsibility for their creations.

The Role of Regulatory Bodies

To tackle these challenges, many experts advocate for the establishment of regulatory bodies specifically focused on the governance of intelligent agents. Such organizations can facilitate collaboration between technologists, ethicists, and policymakers to create cohesive standards that ensure compliance with ethical norms. For example, the European Union's Artificial Intelligence Act, which entered into force in 2024, imposes transparency and accountability obligations on AI systems. By proactively addressing these issues, regulatory bodies can create guidelines that protect consumers and ensure that technological advances align with societal values.

Public Involvement and Ethical Discourse

A critical factor in developing ethical guidelines is the involvement of the public. The increasing reliance on intelligent agents to make significant life decisions means that diverse community perspectives should inform ethical standards. Public forums, surveys, and collaborative workshops can serve as platforms for discussion, allowing citizens to voice their values and concerns. Engaging the public not only enriches the discourse around intelligent agents but also fosters trust in these systems. For instance, initiatives like the AI Ethics Lab in San Francisco host discussions aimed at demystifying AI and soliciting public input on ethical practices, exemplifying how community involvement can influence the ethical direction of technology.

Advancements in Explainable AI

One of the promising avenues for addressing ethical concerns in autonomous decision-making is the development of explainable AI (XAI). XAI seeks to make the decision-making processes of intelligent agents more transparent and understandable to humans. By providing insights into how decisions are made, XAI can help users trust the systems they interact with. Research indicates that when users understand the rationale behind an agent’s decision, they are more likely to accept its outcomes, even if those outcomes are unfavorable. For example, in healthcare, an AI system that recommends treatments can communicate its reasoning, potentially improving patient trust and engagement.
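A minimal sketch of one simple form of explanation follows, assuming a linear scoring model: each feature's contribution is its weight multiplied by its value, and the contributions can be ranked to show what drove the score. The function name, the weights, and the feature names are hypothetical and have no clinical meaning. Explanation methods for more complex models are considerably more involved.

```python
def explain_linear_score(weights, bias, features):
    """Return the score and per-feature contributions, largest magnitude first."""
    contributions = {name: weights[name] * value for name, value in features.items()}
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked


# Hypothetical model and patient record, for illustration only.
weights = {"age": 0.02, "blood_pressure": 0.01, "smoker": 0.5}
patient = {"age": 50, "blood_pressure": 120, "smoker": 1}

score, ranked = explain_linear_score(weights, bias=-1.0, features=patient)
print(f"score = {score:.2f}")
for name, contribution in ranked:
    print(f"{name}: {contribution:+.2f}")
```

Presenting the ranked contributions alongside the recommendation gives a clinician or patient something specific to question, which is the kind of understandable justification the paragraph above describes.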

International Cooperation on Ethical Standards

As intelligent agents cross borders, the ethical implications of their use require global cooperation. International organizations, like the United Nations, are advocating for global ethical standards for AI. By fostering cooperation among nations, it becomes possible to establish baseline ethical practices while considering cultural differences. For example, UNESCO's Recommendation on the Ethics of Artificial Intelligence, adopted in 2021, calls for creating frameworks that promote inclusiveness, justice, and diversity in AI development. Such collaborative initiatives can establish common ground, allowing nations to work together in navigating the moral landscape of intelligent agents.

In addressing the intricate ethical landscape arising from the integration of intelligent agents into autonomous decision-making, multi-faceted approaches are essential. From regulatory frameworks to community engagement and international cooperation, the pursuit of ethical integrity in technology requires collective effort. As we move forward into this uncharted domain, continuous dialogue and proactive initiatives will be pivotal in shaping a future where intelligent agents operate within a clearly defined ethical framework.


Conclusion: Navigating the Ethical Future of Intelligent Agents

The integration of intelligent agents into autonomous decision-making presents both exciting opportunities and formidable challenges. As these technologies evolve, the ethical implications must be carefully navigated to ensure they serve humanity rather than undermine it. The complexity of accountability in scenarios involving autonomous systems necessitates a robust ethical framework that clearly delineates responsibilities among developers, organizations, and the intelligent agents themselves. By establishing regulatory bodies, fostering public involvement, and promoting advancements in explainable AI, we can begin to construct a landscape where ethical practices are prioritized.

The call for international cooperation in developing global ethical standards is increasingly vital as intelligent agents transcend borders. By creating cohesive guidelines that respect cultural differences while promoting inclusivity and fairness, we can harness the potential of AI for the common good. Engaging diverse voices in ethical discussions enhances public trust, encouraging collaboration in shaping the path forward.

Moving ahead, it is imperative to maintain an ongoing dialogue that considers new advancements and their implications for society. As we stand on the threshold of an era marked by autonomous decision-making, a commitment to ethical integrity will pave the way for a future where intelligent agents contribute positively to our lives, aligning technological progress with our core human values.